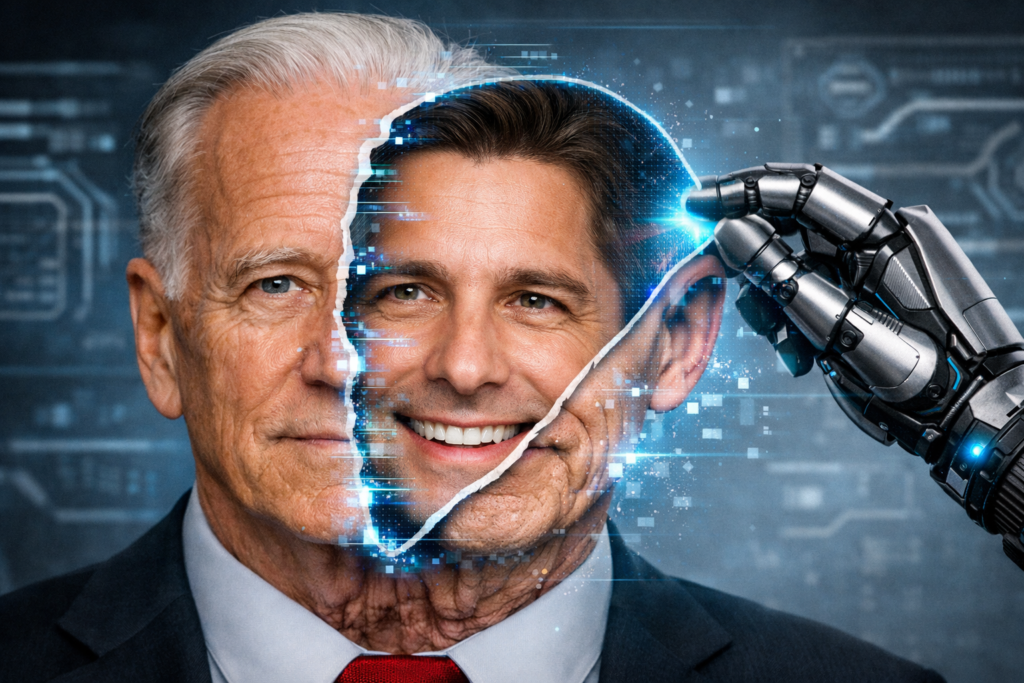

Not long ago, creating a convincing fake video or voice recording required serious technical skill. Today, widely available AI tools can clone a person’s voice in seconds and generate realistic video using just a handful of images. This shift has changed the threat landscape completely.

Cyber criminals are no longer limited to text-based scams. They can now look and sound like someone you trust: a colleague, a boss, even a family member. And they are actively exploiting that advantage.

How Deepfakes Are Created

Every deepfake starts with data. Attackers collect photos, videos, and voice samples from publicly available sources like social media posts, recorded meetings, webinars, interviews, or even casual voice notes. In some cases, they actively gather this material by calling targets and keeping them engaged long enough to capture usable audio.

The risk grows considerably when attackers gain access to private accounts. If someone frequently shares voice notes or videos, a compromised account becomes a goldmine for building highly convincing clones. Once enough data is gathered, AI tools handle the rest. These range from simple, automated bots to advanced platforms capable of generating realistic speech and facial movement.

Deepfakes are typically combined with social engineering: carefully designed scenarios that push people into acting quickly. A common tactic is to simulate a poor connection, such as a glitchy call followed by a short video or voice message. The low quality conveniently hides imperfections in the fake. The message itself is almost always urgent:

- an emergency,

- a financial problem,

- or a situation that demands immediate action.

And crucially, the solution usually involves sending money, not to the “person” you recognise, but to an alternative account controlled by the attacker.

More recently, real-time deepfake tools have made things even more concerning. Attackers can now manipulate live video calls, replacing their face and voice as the conversation happens.

How to Recognise a Deepfake

Even the most convincing deepfakes are not perfect. If you know what to look for, there are consistent signs that can expose them.

Visual signs

- Inconsistent lighting and shadows

Lighting often gives deepfakes away. Shadows may fall in the wrong direction, or the face may appear lit differently from the surroundings. Reflections on the skin can also look unnatural or be missing entirely.

- Distorted facial details

Pay close attention to the edges of the face, especially the hairline. Blurring, flickering, or strange colour transitions are common where the generated face overlaps the original footage.

- Unnatural eye behaviour

Human blinking follows a natural rhythm. Deepfakes often get this wrong, blinking too much, too little, or unevenly. Eyes may also appear unusually still or “lifeless”.

If you’re looking at an image, reflections in the eyes are a useful clue. In genuine photos, the reflections in both eyes tend to match. In manipulated ones, they often don’t.

- Lip-sync mismatches

Even advanced systems struggle to perfectly align speech with lip movement. Small delays or awkward mouth shapes, especially on certain sounds, can signal a fake.

- Background issues

The background may appear overly blurred, static, or disconnected from the subject. In some cases, it doesn’t move naturally with the camera.

Auditory signs

- Flat or synthetic tone

AI-generated voices can sound smooth but lack natural variation. If the voice feels unusually even or slightly artificial, be cautious.

- Missing natural pauses

Real speech includes breathing, pauses, and small imperfections. Synthetic audio often skips or misplaces these details.

- Abrupt cuts or odd phrasing

Words may run together, cut off unexpectedly, or carry unnatural emphasis.

- Loss of personal speech patterns

Everyone has unique ways of speaking: specific phrases, accents, or habits. If those are missing or poorly imitated, something is off.

It’s also worth noting that attackers often deliberately reduce audio or video quality. A “bad connection” is frequently used to hide these flaws, which is itself a warning sign.

Behavioural signs (the most reliable)

Technology can imitate appearance and sound, but behaviour is much harder to fake in real time.

- Difficulty with movement

Ask the person to turn their head fully to the side. Many deepfakes struggle with angles outside a straight-on view, causing distortion or glitches.

- Problems with spontaneous actions

Simple, unscripted actions can break the illusion. Deepfakes often fail to render these interactions correctly:

- waving a hand across the face,

- touching their nose,

- picking up an object nearby.

- Avoidance of verification

If the person resists simple requests such as switching platforms, making a regular phone call, or sharing their screen, treat it as a red flag.

- Inability to answer personal questions

Ask something only the real person would know. If the response is vague, delayed, or incorrect, do not ignore it.

- No knowledge of agreed safeguards

A pre-agreed codeword or phrase can be extremely effective. A legitimate caller will know it instantly. An attacker will not.

What to Do If You Suspect a Deepfake

If something feels wrong, trust that instinct and act carefully.

End the interaction and verify independently: Stop the call or conversation and contact the person through a different channel, such as their usual phone number or another trusted platform.

Do not act under pressure: Urgency is a key part of these scams. Take a step back. A genuine emergency can withstand a few minutes of verification.

Alert the person involved: If the message appears to come from someone you know, inform them through a separate channel. Their account may have been compromised.

How to Reduce Your Own Exposure

Control what you share publicly: Reduce access to personal photos, videos, and voice recordings where possible.

Be cautious with app permissions: Avoid granting camera and microphone access to untrusted applications.

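Strengthen account security: Use strong, unique passwords and enable two-factor authentication. This keeps attackers away from the private voice notes and videos they need to build convincing clones, and stops them from using your accounts to contact people who trust you. Even if someone creates a convincing fake, strong account security limits further damage.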

Prepare your inner circle: Make sure friends and family understand how these scams work. Agree on simple verification methods, such as a shared codeword for emergencies.

Use detection tools where necessary: There are tools that analyse media for signs of AI generation, but they should be treated as an aid, not a guarantee.

In conclusion, deepfakes work because they exploit trust, not just technology. The most effective defence is not technical expertise but awareness, scepticism, and a willingness to pause before acting. If something doesn’t quite add up, it probably isn’t what it seems.