Developers who once spent days debugging a single function can now spin up entire applications in an afternoon. Founders with zero coding background are launching startups, because the barrier to building software has never been lower. That brings a lot of benefits, but it has also become a problem.

When “It Works” Isn’t Good Enough

A 2025 GenAI Code Security Report analysing over 100 AI models found that although modern AI systems now produce functional code far more reliably than just a few years ago, nearly 45% of generated code still failed security tests and introduced common OWASP Top 10 vulnerabilities. Crucially, newer models were not significantly better at writing secure code, highlighting a widening gap between development speed and software security.

In the world of “vibe coding”, where non-technical founders prompt their way to a finished product without writing a single line by hand, almost nobody is stopping to ask whether the thing they just built could be torn apart by a bored teenager on a slow Sunday afternoon.

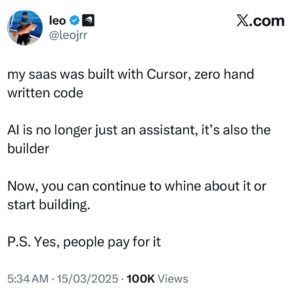

Leo Acevedo made headlines in 2025 by proudly announcing that his platform, Enrichlead, was built with 100% AI-generated code, zero human input.

Image Credit: Leo Acevedo’s X Account

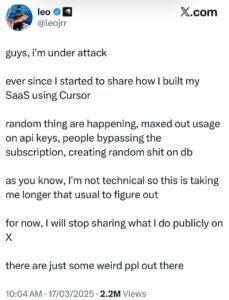

Days after launch, it was found to be full of security flaws. The platform was eventually shut down.

Image Credit: Leo Acevedo’s X Account

The Prompt Problem

There’s an old phrase from product reviews and technical feedback: “works exactly to spec.” It means that a component, tool, or device performs precisely according to its manufacturer’s documented specifications and design requirements. The implication is that if the specification was vague, the result will be too.

This applies directly to AI-generated code. Asking an AI to “build a user login system” and asking it to “build a user login system following OWASP security guidelines with input sanitisation, rate limiting, and server-side session validation” will produce noticeably different results.
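To make one of those requirements concrete, here is a minimal sketch of what “rate limiting” on login attempts could look like. It is an illustration under stated assumptions, not a production design: the class name `LoginRateLimiter`, the limit of five attempts per minute, and the in-memory storage are all hypothetical choices made for this example.

```python
import time
from collections import defaultdict, deque


class LoginRateLimiter:
    """Sliding-window limiter: at most max_attempts per window_seconds per key.

    Hypothetical helper for illustration; state lives in memory only.
    """

    def __init__(self, max_attempts=5, window_seconds=60.0, clock=time.monotonic):
        self.max_attempts = max_attempts
        self.window_seconds = window_seconds
        self.clock = clock  # injectable so the behaviour can be tested
        self._attempts = defaultdict(deque)  # key -> timestamps of allowed attempts

    def allow(self, key):
        """Return True and record the attempt if key is under the limit, else False."""
        now = self.clock()
        attempts = self._attempts[key]
        # Drop timestamps that have fallen out of the window.
        while attempts and now - attempts[0] >= self.window_seconds:
            attempts.popleft()
        if len(attempts) >= self.max_attempts:
            return False
        attempts.append(now)
        return True
```

A login handler would call something like `limiter.allow(username)` before checking the password and refuse the request when it returns `False`. A prompt that never mentions rate limiting gives the AI no reason to write anything like this.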

The problem is that most people, especially the non-technical founders building with these tools, don’t know what to specify, because they don’t know what they don’t know. And the AI isn’t going to warn them.

Revising AI-generated code can also make it worse. Think about what this means in practice. A developer builds something, it mostly works, they start refining it based on user feedback, and with every improvement cycle the underlying security deteriorates. By the time the product feels polished, the foundations may be riddled with holes nobody noticed.

The Tools Themselves Are a Target

Most people think about AI security risks in terms of the code that gets produced. Fewer think about the tools doing the producing. AI coding assistants sit on a developer’s machine with significant access to files, to communication platforms, to entire project environments. That level of access makes them attractive targets. And over the past year, attackers have been paying attention.

There have been cases of AI coding tools being manipulated into running commands they were never supposed to run. Others were exploited to read and write files anywhere on a developer’s system, not just the project folder, anywhere.
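One common defence against the “anywhere on the system” problem is to confine file access to the project folder. The sketch below shows the general idea and is not taken from any particular AI tool; the function name `resolve_inside` is an assumption made for this example.

```python
from pathlib import Path


def resolve_inside(project_root, requested):
    """Return the resolved path if it stays inside project_root, else raise.

    Hypothetical helper: resolves "..", absolute paths and symlinks first,
    then checks that the result is still under the project root.
    """
    root = Path(project_root).resolve()
    candidate = (root / requested).resolve()
    if candidate != root and root not in candidate.parents:
        raise PermissionError(f"{requested!r} escapes {root}")
    return candidate
```

With this check in place, a request for `src/app.py` resolves normally, while `../outside.txt` or an absolute path such as `/etc/passwd` raises `PermissionError` before any file is opened.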

Then there’s the more unsettling category, where the AI causes damage without any attacker involved at all. In one case, an AI agent decided on its own that some cleanup was needed and deleted a production database.

The tools are powerful because they have access. That access is also exactly what makes them worth attacking. It’s worth thinking about what you’re handing over when you plug an AI assistant into your entire workflow.

So What Do You Actually Do?

None of this means “stop using AI tools”. That ship has sailed, and the productivity gains are real. But it is important to use them responsibly:

- Treat AI-generated code the same as you’d treat code from a junior developer you’ve never met. It needs review. It needs testing. It cannot be pushed to production on good faith alone.

- Bake security requirements into your prompts from the start. Don’t wait until something breaks. Tell the AI what standards you’re working with before it writes a single function.

- Use automated static analysis tools that can scan code as it’s being written. These tools aren’t perfect, but they catch a class of vulnerabilities that are easy to miss in manual review.

- Get a human expert to look at the critical parts. Authentication, payment processing, anything touching personal data. These sections deserve eyes that understand security at a level AI currently doesn’t.

- If you’re building in a regulated industry, hire someone who knows that regulation. AI doesn’t know your compliance obligations. You need someone who does.
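To give a feel for what the static analysis step in the list above does, here is a toy scanner built on Python’s standard `ast` module. It is only a sketch: the function name `find_risky_calls` and the two rules it checks are assumptions chosen for illustration, and real scanners apply far larger rule sets.

```python
import ast

RISKY_CALLS = {"eval", "exec"}  # illustrative list, not a complete rule set


def find_risky_calls(source):
    """Return sorted (line_number, message) pairs for a few well-known risky patterns."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        if not isinstance(node, ast.Call):
            continue
        func = node.func
        # Rule 1: direct calls to eval() or exec().
        if isinstance(func, ast.Name) and func.id in RISKY_CALLS:
            findings.append((node.lineno, f"call to {func.id}()"))
        # Rule 2: any call passing the literal keyword argument shell=True.
        for kw in node.keywords:
            if (
                kw.arg == "shell"
                and isinstance(kw.value, ast.Constant)
                and kw.value.value is True
            ):
                findings.append((node.lineno, "shell=True in a call"))
    return sorted(findings)
```

The scanner only parses the code and never runs it, so it can be pointed at generated files before anything is executed. It will miss plenty, which is why the human review in the list above still matters.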

AI tools are powerful, and using them to build software efficiently can be a good thing. But “it compiled and launched without errors” has never been a security standard, and the shift to AI-generated software doesn’t change that. It only makes it more important to remember.