If you have ever used AI tools such as ChatGPT, Claude, or Copilot, you may have noticed instances where they present incorrect information with complete confidence. These errors are known as AI hallucinations.

I was listening to Gartner’s Chief of Research, Chris Howard, explain what AI hallucinations are and why they occur. He described generative AI as a prediction machine. In situations where it anticipates that something should exist in a response but lacks the necessary data, it may simply generate an answer to fill the gap.

He gave an interesting example. At Gartner, several analysts asked generative AI tools like ChatGPT to create their biographies. What emerged was striking. In cases where public information about the analysts existed, the model retrieved it correctly. However, in most instances, ChatGPT generated obituaries.

The likely explanation is that much of the biographical content in the model’s training data comes from historical sources such as Wikipedia, where many biographies are written about deceased individuals. As a result, the model associates “biography” with formats that often include death summaries. When it lacks complete information, it predicts an ending and sometimes produces an obituary.

AI Hallucinations and “Promptstitution”

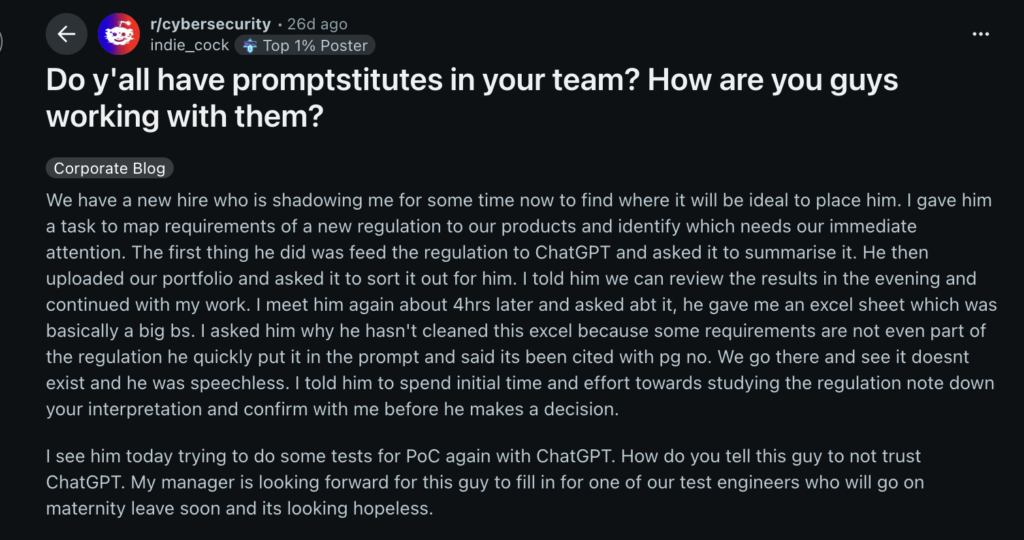

A similar case appeared in a Reddit discussion, which also introduces the idea of what some refer to as “promptstitution”. A promptstitute is someone who excessively relies on generative AI to think, write, and solve problems, often outsourcing critical thinking and personal judgement entirely to the tool.

In the Reddit example, a new employee was being onboarded and tasked with mapping regulatory requirements to company products to determine what needed urgent attention. Instead of engaging with the material directly, the employee first fed the regulation into ChatGPT to summarise it. He then uploaded the company’s product portfolio and asked the AI to categorise it.

The problem emerged when it was discovered that some of the regulatory requirements he referenced did not actually exist. The AI had effectively introduced fabricated elements, and the user had accepted them without verification.

Image Credit: Reddit

This is where the risk becomes clear: when humans delegate thinking entirely to AI, they may also inherit its mistakes without realising it.

Types of AI Hallucinations and How to Recognise Them

Artificial intelligence systems, particularly large language models, can produce highly fluent and human-like responses. However, they do not understand truth in the human sense. They generate outputs based on patterns in data rather than verified knowledge.

Because of this, they can produce responses that sound correct but are inaccurate, misleading, or entirely made up. These are known as AI hallucinations.

Below are the main types and their warning signs.

1. Incorrect or Fabricated Information

This occurs when the AI produces information that is false but presented confidently.

Common signs:

- Inventing sources, statistics, or quotations

- Producing vague or incoherent explanations when context is unclear

- Contradicting established facts or earlier information

2. Loss of Context

Here, the AI behaves as though it is referencing information that does not actually exist in the conversation or document.

Common signs:

- Answering without requesting missing details

- Maintaining false assumptions throughout the response

- Repeating incorrect claims even when challenged

3. Misaligned Answers

In this case, the information may be accurate in isolation but does not address the question properly.

Common signs:

- Providing overly long or unfocused explanations

- Drifting into unrelated topics

- Ignoring specific instructions or constraints

4. Internal Contradictions

Because a model generates each response by predicting likely text rather than by checking it against what it said earlier, inconsistencies can occur within the same conversation.

Common signs:

- Giving conflicting answers to the same question

- Making incompatible claims without acknowledging the contradiction

- Offering unrelated justifications to reconcile inconsistencies
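One way to surface such contradictions is to ask the same question several times and compare the answers. The sketch below assumes the answers have already been collected as plain strings. The function names and the normalisation rule are illustrative choices, not part of any AI tool's API.

```python
import re
from collections import Counter


def normalise(answer: str) -> str:
    """Lowercase, drop punctuation and collapse whitespace so trivial differences are ignored."""
    cleaned = re.sub(r"[^\w\s]", "", answer.lower())
    return " ".join(cleaned.split())


def agreement_report(answers: list[str]) -> dict:
    """Summarise how often repeated answers to one question agree."""
    if not answers:
        raise ValueError("at least one answer is required")
    counts = Counter(normalise(a) for a in answers)
    top_answer, top_count = counts.most_common(1)[0]
    return {
        "distinct_answers": len(counts),
        "most_common": top_answer,
        "agreement": top_count / len(answers),
    }


# Hypothetical answers collected by asking the same question three times.
answers = ["The limit is 30 days.", "the limit is 30 days", "The limit is 90 days."]
report = agreement_report(answers)
```

Low agreement is a prompt to check the claim against a source. High agreement is not proof of correctness, since a model can repeat the same mistake consistently.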

5. Unreliable Numbers or Statistics

AI models often generate figures that sound plausible but have not been verified.

Common signs:

- Overly precise or unrealistic statistics

- References to studies or data that cannot be traced

- Mixed-up timelines or historical inaccuracies
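When the source material is available, part of this check can be automated: any figure in the AI output that does not appear in the source deserves a closer look. The sketch below assumes both texts are plain strings. The helper names, the number pattern, and the sample sentences are made up for illustration.

```python
import re

# Matches integers, decimals and comma-grouped numbers, with an optional percent sign.
NUMBER_PATTERN = re.compile(r"\d+(?:[.,]\d+)*%?")


def extract_numbers(text: str) -> set[str]:
    """Return every numeric token in the text, with comma separators removed."""
    return {match.replace(",", "") for match in NUMBER_PATTERN.findall(text)}


def untraceable_numbers(ai_output: str, source_text: str) -> set[str]:
    """Numbers that appear in the AI output but nowhere in the source text."""
    return extract_numbers(ai_output) - extract_numbers(source_text)


# Hypothetical source and AI output.
source_text = "The survey covered 1,200 firms, and 38% reported an incident."
ai_output = "Of 1200 firms surveyed, 38% reported an incident, costing 4.7 million on average."
flagged = untraceable_numbers(ai_output, source_text)
```

Here only `4.7` is flagged, because it has no counterpart in the source. A figure that does appear in the source can still be attached to the wrong claim, so this narrows the manual check rather than replacing it.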

Reducing AI Hallucinations While Avoiding Promptstitution

AI hallucinations cannot be fully eliminated, but they can be significantly reduced through structured use and disciplined interaction. The core principle is simple: AI performs better when it is guided clearly and constrained properly. Without structure, it fills gaps on its own, and that is where errors begin.

1. Reduce Guesswork

The more open-ended the task, the more likely the AI is to fabricate information.

Good practice:

- Define narrow, specific tasks

- Avoid vague or overly broad prompts

- Set clear boundaries for output

- Treat AI as an assistant, not an authority

Over-reliance, where AI is asked to think, research, and conclude everything, greatly increases hallucination risk.

2. Use High-Quality Data

AI is only as reliable as the data it is trained on or given as input.

Good practice:

- Use relevant and accurate datasets

- Avoid mixing unrelated or low-quality sources

- Regularly update and validate input data

3. Provide Structure

Clear structure reduces ambiguity and improves output quality.

Good practice:

- Break complex tasks into smaller parts

- Use templates where possible

- Ask for assumptions explicitly

Without structure, the model may “fill in” missing logic incorrectly.
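A template can be as simple as a function that assembles every prompt from the same fixed sections. The sketch below is one possible layout. The section names, the wording of the rules, and the sample inputs are assumptions for illustration, and no specific AI tool's API is involved.

```python
def build_prompt(task: str, source_text: str, output_format: str) -> str:
    """Assemble a structured prompt from fixed, labelled sections."""
    sections = [
        ("Task", task.strip()),
        ("Source material", source_text.strip()),
        ("Output format", output_format.strip()),
        (
            "Rules",
            "Use only the source material above. "
            "List every assumption you make under a heading 'Assumptions'. "
            "If the source does not contain the answer, say so instead of guessing.",
        ),
    ]
    return "\n\n".join(f"{title}:\n{body}" for title, body in sections)


# Hypothetical inputs for a single, narrow task.
prompt = build_prompt(
    task="Summarise the retention requirements in the source material.",
    source_text="Records must be kept for five years after account closure.",
    output_format="A bulleted list with one requirement per bullet.",
)
```

Keeping the rules in the template means the request for explicit assumptions is never forgotten, and the resulting text can be pasted into whichever tool is in use.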

4. Be Explicit in Instructions

AI models follow clear, precise instructions more reliably than vague ones.

Good practice:

- Specify scope, tone, and constraints clearly

- Refine prompts iteratively

- Define what should not be included

5. Keep Humans in Control of Thinking

This is the most important safeguard.

Good practice:

- Treat AI outputs as drafts, not final truth

- Verify facts, data, and claims independently

- Apply critical thinking before accepting conclusions

- Retain responsibility for decisions
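The practices above can be made concrete by recording each claim in an AI draft alongside the place where a person confirmed it. The sketch below is a minimal illustration. The `Claim` class, its field names, and the sample claims are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Claim:
    text: str
    # Where a person confirmed the claim, or None if nobody has checked it yet.
    verified_against: Optional[str] = None


def unverified(claims: list[Claim]) -> list[Claim]:
    """Claims that have not been checked against an independent source."""
    return [claim for claim in claims if not claim.verified_against]


def ready_for_use(claims: list[Claim]) -> bool:
    """A draft is usable only when every claim in it has been verified."""
    return not unverified(claims)


# Hypothetical claims taken from an AI-generated draft.
claims = [
    Claim("Reports are due within 30 days", verified_against="Regulation text, reporting section"),
    Claim("Annual audits are mandatory"),
]
outstanding = [claim.text for claim in unverified(claims)]
```

In this example the draft is not ready for use, because the second claim has no recorded source. The decision to accept or reject it stays with the person doing the checking.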

Excessive dependence can erode analytical ability over time. This is the essence of promptstitution: outsourcing thinking until judgement weakens.

Conclusion

Preventing AI hallucinations is not only a technical challenge; it is also a behavioural one. The quality of AI output depends heavily on how it is guided, constrained, and validated by humans.

When used properly, AI enhances thinking and accelerates decision-making. When used blindly, it produces confident but unreliable outputs that can easily mislead.

The real distinction is not between good and bad AI, but between disciplined use and passive dependence. AI should amplify human intelligence, not replace it.